Basic HA Installation - Tarball

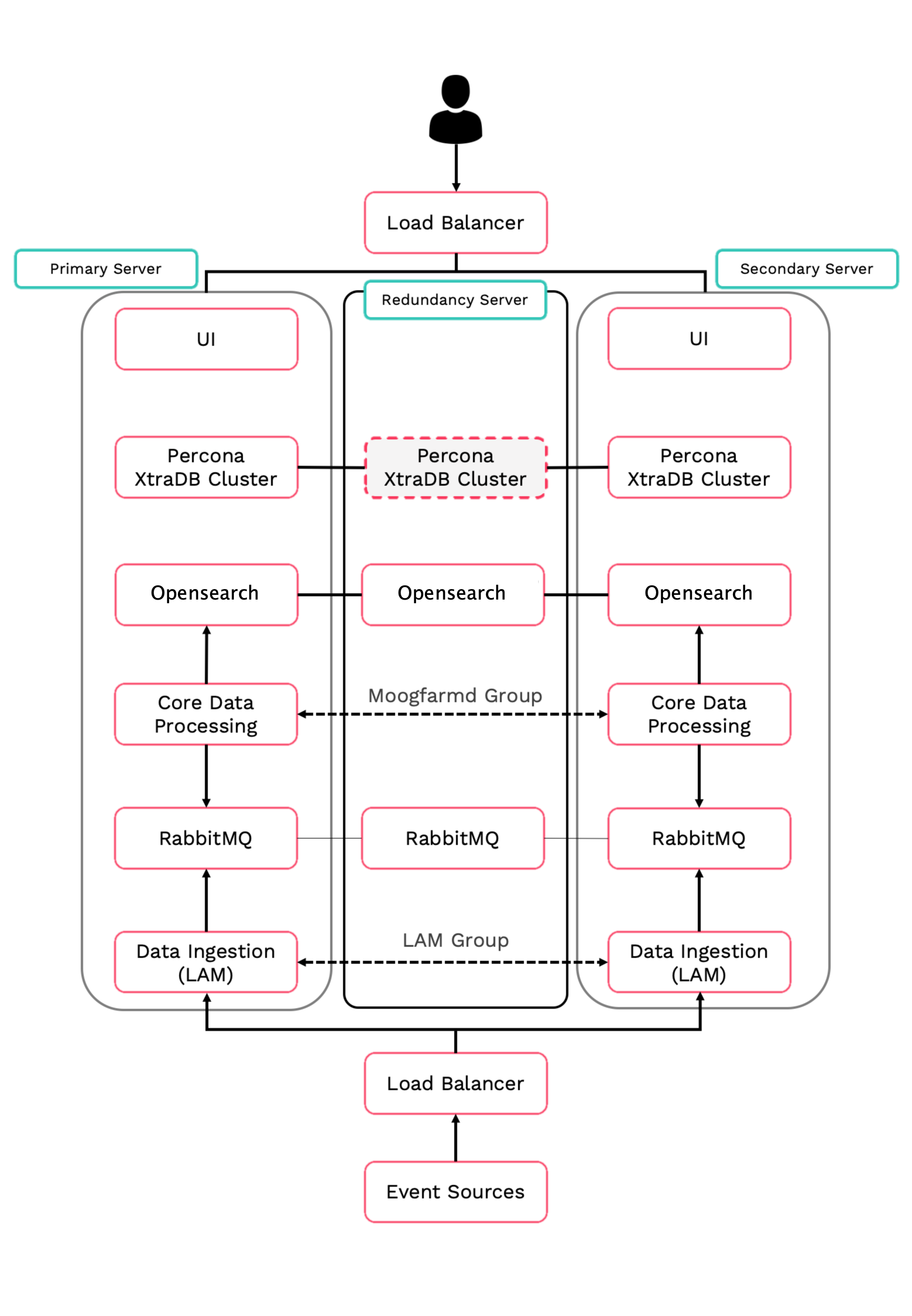

This topic describes the basic High Availability (HA) installation for Moogsoft Onprem using a tarball. This installation configuration has three servers: one each for the primary and secondary clusters, and a redundancy server.

This topic describes how to perform the following tasks for the core Moogsoft Onprem components:

Stage the installation files on all servers.

Install the Moogsoft Onprem packages and set the environment variables.

Set up the Percona XtraDB database and HA Proxy.

Configure the RabbitMQ message broker and Opensearch search service.

Configure high availability for the Moogsoft Onprem core processing components.

Initialize the user interface (UI).

Configure high availability for data ingestion.

Before you begin

Before you start the tarball basic HA installation of Moogsoft Onprem:

Familiarize yourself with the single-server deployment process: Install Moogsoft Onprem and Upgrade Moogsoft Onprem.

Read the High Availability Overview and the HA Reference Architecture.

Verify that the hosts can access the required ports on the other hosts in the group. See HA Reference Architecture for more information.

Complete either the Moogsoft Onprem Offline Tarball Pre-installation or Moogsoft Onprem Online Tarball Pre-installation instructions.
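The port check in the prerequisites above can be scripted. The following is a minimal sketch, not part of the Moogsoft tooling: port_open and check_ports are hypothetical helpers that assume bash (for its /dev/tcp redirection) and the coreutils timeout command are installed. The ports in the usage note are only those that appear in this topic; HA Reference Architecture remains the authoritative list.

```shell
# Hypothetical helper (not part of Moogsoft Onprem): succeed if a TCP
# connection to host:port can be opened within 3 seconds.
# Assumes bash (for /dev/tcp) and coreutils timeout are installed.
port_open() {
    timeout 3 bash -c 'exec 3<>"/dev/tcp/$0/$1"' "$1" "$2" 2>/dev/null
}

# Report the state of each listed port on one host.
# Arguments: host, then one or more port numbers.
check_ports() {
    host=$1
    shift
    for port in "$@"; do
        if port_open "$host" "$port"; then
            echo "$host:$port open"
        else
            echo "$host:$port closed"
        fi
    done
}
```

For example, running check_ports <secondary_ip> 3306 5672 9200 15672 from the primary server prints one line per port. A "closed" result can mean either that a firewall blocks the port or that the service is not yet installed, so the check is most useful for ports of services that are already running.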

Important

Enabling the latency-performance RHEL profile is strongly recommended. This profile allows RabbitMQ to operate much more efficiently, which increases throughput and makes it more consistent.

For more information on performance profiles, see https://access.redhat.com/documentation/en-us/red_hat_enterprise_linux/8/html/monitoring_and_managing_system_status_and_performance/getting-started-with-tuned_monitoring-and-managing-system-status-and-performance

Enable the profile by running the following command as root:

tuned-adm profile latency-performance

This setting will survive machine restarts and only needs to be set once.
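To confirm that the profile is in effect, you can inspect the output of tuned-adm active. The helper below is a small sketch, not part of the product: is_latency_profile is a hypothetical name, and it assumes the usual "Current active profile: <name>" output format.

```shell
# Hypothetical helper: succeed if the given `tuned-adm active` output
# names the latency-performance profile.
is_latency_profile() {
    case "$1" in
        *"Current active profile: latency-performance"*) return 0 ;;
        *) return 1 ;;
    esac
}
```

A typical call is is_latency_profile "$(tuned-adm active)" && echo "profile active".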

Prepare to install

Before you install Moogsoft Onprem:

Log in as the Linux user on the primary, secondary and redundancy servers and go to the working directory on each server.

Make sure that the directories where you will install Moogsoft Onprem meet the size requirements stated in the Moogsoft Onprem Offline Tarball Pre-installation or Moogsoft Onprem Online Tarball Pre-installation instructions. Some files, such as the Percona database and Opensearch files, will be installed into the Linux user's $HOME/install directory.

Remove existing environment variables such as $MOOGSOFT_HOME from previous installations.

Log in as the Linux user on the primary, secondary and redundancy servers, and on each one, install the dependencies as needed by following the instructions here: Moogsoft Onprem Offline Tarball Pre-installation

Install database and Moogsoft Onprem files on the primary server

Run the following commands on the primary, secondary and redundancy servers that will house a database node:

VERSION=9.2.0
mkdir -p ~/install
cd /opt/moogsoft/${VERSION}/preinstall_files
cp percona-xtrabackup*.tar.gz ~/install
cp Percona-XtraDB-Cluster*.tar.gz ~/install

Run the Percona install script on the primary database server. Substitute the IP addresses of your servers and choose the password for the sstuser.

bash install_percona_nodes_tarball.sh -p -i <primary_ip>,<secondary_ip>,<redundancy_ip>

To verify that the Percona install was successful, run the following command on the primary server. Substitute the IP address of your primary server:

curl http://<primary_ip>:9198

If successful, you see the following message (it can take a few minutes for the node to sync):

Percona XtraDB Cluster Node is synced.

Now that you've installed the Percona database, you can install Moogsoft Onprem. Go to your working directory on the primary server, and extract the Moogsoft Onprem distribution archive:

tar -xf moogsoft-enterprise-${VERSION}.tgz

Run the installation script in your primary server working directory to install Moogsoft Onprem:

bash moogsoft-enterprise-install-${VERSION}.sh

When prompted, enter the directory in which to install Moogsoft Onprem on the primary server. For example, /opt/moogsoft/aiops. The script guides you through the installation process. You can modify the default installation directory displayed for your environment.

Edit the .bashrc file to set the MOOGSOFT_HOME variable and update the PATH variable to include the directories under MOOGSOFT_HOME that contain binaries and scripts. For example:

export MOOGSOFT_HOME=/opt/moogsoft/aiops
export PATH=$PATH:$MOOGSOFT_HOME/bin:$MOOGSOFT_HOME/bin/utils:$MOOGSOFT_HOME/cots/erlang/bin:$MOOGSOFT_HOME/cots/rabbitmq-server/sbin

Then source the ~/.bashrc file:

source ~/.bashrc
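If you script the .bashrc change rather than editing the file by hand, it helps to make the step safe to repeat. The following sketch uses a hypothetical helper, ensure_line, which is not part of the Moogsoft tooling; it appends a line only when that exact line is not already in the file.

```shell
# Hypothetical helper: append a line to a file only if that exact line
# is not already present, so the step can be rerun without duplicates.
ensure_line() {
    file=$1
    line=$2
    touch "$file"
    grep -qxF -- "$line" "$file" || printf '%s\n' "$line" >> "$file"
}
```

For example, ensure_line ~/.bashrc 'export MOOGSOFT_HOME=/opt/moogsoft/aiops' adds the export once, however many times it is run. Use single quotes around lines that contain $ so that variables are written literally rather than expanded.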

Configure the Tool Runner to execute locally:

sed -i 's/# execute_locally: false,/,execute_locally: true/1' $MOOGSOFT_HOME/config/servlets.conf
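Because sed -i changes the file in place, you may want to preview the substitution first. This sketch wraps the same search and replacement text in a hypothetical helper, preview_tool_runner_edit, which prints the resulting line and leaves the file untouched.

```shell
# Hypothetical helper: print the line(s) the Tool Runner substitution
# would produce, without modifying the file passed as the first argument.
preview_tool_runner_edit() {
    sed -n 's/# execute_locally: false,/,execute_locally: true/p' "$1"
}
```

Running preview_tool_runner_edit $MOOGSOFT_HOME/config/servlets.conf should print a line containing ,execute_locally: true. Empty output means the commented-out default was not found, for example because the file has already been edited.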

Initialize Moogsoft Onprem on the primary server

Initialize the database on the primary server:

moog_init_db.sh -qIu root

When prompted for a password, enter the password for the root database user, not the password for the Linux user. If you are installing Percona on this machine for the first time, leave the password blank and press Enter to continue. The script prompts you to accept the End User License Agreement (EULA) and guides you through the initialization process.

Install Moogsoft Onprem files on the secondary and redundancy servers

Run the Percona install script on the secondary and redundancy servers. Substitute the IP addresses of your servers and use the same password as for the primary server.

bash install_percona_nodes_tarball.sh -i <primary IP address>,<secondary IP address>,<redundancy IP address>

To verify that the Percona install was successful, run the following command on the secondary and redundancy servers. Substitute the IP address of your primary server:

curl http://<primary IP address>:9198

If successful, you see the following message:

Percona XtraDB Cluster Node is synced.

It takes a moment for the secondary and redundancy servers to sync. If you do not get this message, wait for a few moments and then try again.
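The wait-and-retry advice above can be automated. The following is a sketch under stated assumptions: is_synced and wait_for_sync are hypothetical helpers, the defaults of 30 attempts and a 10 second delay are arbitrary, and the check simply looks for the "Node is synced" text quoted in this topic.

```shell
# Hypothetical helper: succeed if the text contains the "synced"
# response quoted in this topic.
is_synced() {
    case "$1" in
        *"Node is synced"*) return 0 ;;
        *) return 1 ;;
    esac
}

# Hypothetical helper: poll http://<ip>:9198 until the node reports
# synced or the attempts run out.
# Arguments: IP address, attempts (default 30), delay in seconds (default 10).
wait_for_sync() {
    ip=$1
    attempts=${2:-30}
    delay=${3:-10}
    i=0
    while [ "$i" -lt "$attempts" ]; do
        if is_synced "$(curl -s --max-time 5 "http://$ip:9198" 2>/dev/null)"; then
            echo "synced"
            return 0
        fi
        i=$((i + 1))
        sleep "$delay"
    done
    echo "not synced after $attempts attempts" >&2
    return 1
}
```

For example, wait_for_sync <primary_ip> returns success as soon as the check on port 9198 reports a synced node, and fails with a message on standard error if it never does.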

Now that you've installed the Percona database, you can install Moogsoft Onprem. Go to your working directory on the secondary and redundancy servers, and extract the Moogsoft Onprem distribution archive:

source ~/.bashrc
cd /opt/moogsoft/${VERSION}
tar -xf moogsoft-enterprise-${VERSION}.tgz

Run the installation script in your working directory on both servers to install Moogsoft Onprem:

bash moogsoft-enterprise-install-${VERSION}.sh

When prompted, enter the directory in which to install Moogsoft Onprem. For example, /opt/moogsoft/aiops. The script guides you through the installation process. You can modify the default installation directory displayed for your environment.

Edit the .bashrc file to set the MOOGSOFT_HOME variable and update the PATH variable to include the directories under MOOGSOFT_HOME that contain binaries and scripts. For example:

export MOOGSOFT_HOME=/opt/moogsoft/aiops
export PATH=$PATH:$MOOGSOFT_HOME/bin:$MOOGSOFT_HOME/bin/utils:$MOOGSOFT_HOME/cots/erlang/bin:$MOOGSOFT_HOME/cots/rabbitmq-server/sbin

Source the ~/.bashrc file:

source ~/.bashrc

Configure the Tool Runner to execute locally:

sed -i 's/# execute_locally: false,/,execute_locally: true/1' $MOOGSOFT_HOME/config/servlets.conf

Install HA Proxy on primary and secondary servers

Install HA Proxy on the primary and secondary servers (root permission required).

Run the following commands, using your chosen value for MOOGSOFT_HOME. Substitute the IP addresses of your servers.

export MOOGSOFT_HOME=/opt/moogsoft/aiops
$MOOGSOFT_HOME/bin/utils/haproxy_installer.sh -l 3309 -c -i <primary_ip>:3306,<secondary_ip>:3306,<redundancy_ip>:3306

Perform the remaining steps as the moogsoft Linux user.

Check that you can connect to MySQL to confirm successful installation:

mysql -h localhost -P3309 -u root

Set up RabbitMQ on all servers

Initialize and configure RabbitMQ on all three servers.

Primary, Secondary and Redundancy servers:

Run the following command. Substitute a name for your zone. You must use the same zone name for all servers.

moog_init_mooms.sh -pz <my_zone>

Stop RabbitMQ on the secondary and redundancy servers:

process_cntl --process_name rabbitmq stop

The primary server Erlang cookie is located in the Linux user's HOME directory: ~/.erlang.cookie. The Erlang cookie must be the same for all RabbitMQ nodes.

Replace the Erlang cookie on the secondary and redundancy servers with the Erlang cookie from the primary server. You may need to change the file permissions on the secondary and redundancy Erlang cookies first to allow those files to be overwritten.

After copying the cookie, as the Linux user, make the cookies on the secondary and redundancy servers read-only. For example:

cd ~
chmod 600 ~/.erlang.cookie
mv .erlang.cookie .erlang.cookie.orig
scp moogsoft@<PRIMARY_IP>:.erlang.cookie .
chmod 400 .erlang.cookie
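Since every RabbitMQ node must hold the same cookie, it is worth confirming that the copy succeeded. The sketch below compares SHA-256 digests so that the cookie value itself is never printed. file_digest and same_contents are hypothetical helpers, and sha256sum from coreutils is assumed to be available.

```shell
# Hypothetical helper: print the SHA-256 digest of a file
# (digest only, without the file name).
file_digest() {
    sha256sum "$1" | cut -d' ' -f1
}

# Hypothetical helper: succeed if two local files have identical contents.
same_contents() {
    [ "$(file_digest "$1")" = "$(file_digest "$2")" ]
}
```

Run file_digest ~/.erlang.cookie on each server and check that all three digests match. On a secondary or redundancy server, same_contents ~/.erlang.cookie ~/.erlang.cookie.orig is expected to fail, because the original cookie was generated independently.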

Secondary and Redundancy servers:

Restart RabbitMQ on the secondary and redundancy servers and join those servers to the cluster. Substitute the short hostname of your primary server and the name of your zone.

The short hostname is the full hostname excluding the DNS domain name. For example, if the hostname is ip-172-31-82-78.ec2.internal, the short hostname is ip-172-31-82-78. To find out the short hostname, run rabbitmqctl cluster_status on the primary server.

The restart command differs depending on whether you are a root or a non-root user:

Root:

systemctl restart rabbitmq-server

Non-root:

process_cntl --process_name rabbitmq restart

Run the following commands, replacing <restart command> with the restart command that applies to your user:

<restart command>
rabbitmqctl stop_app
rabbitmqctl join_cluster rabbit@<primary_short_hostname>
rabbitmqctl start_app
rabbitmqctl set_policy -p "<ZONE_NAME>" qq-overrides ".+\.HA" '{"delivery-limit": -1}' --apply-to "quorum_queues"

Run rabbitmqctl cluster_status to get the cluster status. Example output follows, with less important output replaced by '...':

Cluster status of node rabbit@secondary ...
...
Basics
Cluster name rabbit@secondary
Disk Nodes
rabbit@primary
rabbit@secondary
Running Nodes
rabbit@primary
rabbit@secondary
Versions
...
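The short hostname used in the join_cluster step can also be derived from a full hostname with shell parameter expansion. This is a small illustration; short_hostname is a hypothetical helper name.

```shell
# Hypothetical helper: strip the DNS domain from a fully qualified
# hostname by removing everything from the first dot onward.
short_hostname() {
    printf '%s\n' "${1%%.*}"
}
```

For example, short_hostname ip-172-31-82-78.ec2.internal prints ip-172-31-82-78. On the primary server itself, hostname -s normally reports the same value, but the name shown by rabbitmqctl cluster_status is the one that RabbitMQ actually uses.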

Set up Opensearch on all servers

Follow the Opensearch Clustering guide here: Opensearch Clustering Guide

Configure Moogsoft Onprem

Configure Moogsoft Onprem by editing the Moogfarmd and system configuration files on the primary and secondary servers.

Primary and Secondary servers:

Make a copy of the $MOOGSOFT_HOME/config/system.conf file:

cp -p $MOOGSOFT_HOME/config/system.conf $MOOGSOFT_HOME/config/system.conf.orig

Edit $MOOGSOFT_HOME/config/system.conf and set the following properties. Substitute the name of your RabbitMQ zone, the server hostnames, and the cluster names:

"mooms" : {
    ...
    "zone" : "<my_zone>",
    "brokers" : [
        {"host" : "<primary_hostname>", "port" : 5672},
        {"host" : "<secondary_hostname>", "port" : 5672},
        {"host" : "<redundancy_hostname>", "port" : 5672}
    ],
    ...
    "cache_on_failure" : true,
    ...
"search" : {
    ...
    "nodes" : [
        {"host" : "<primary_hostname>", "port" : 9200},
        {"host" : "<secondary_hostname>", "port" : 9200},
        {"host" : "<redundancy_hostname>", "port" : 9200}
    ]
    ...
"failover" : {
    "persist_state" : true,
    "hazelcast" : {
        "hosts" : ["<primary_hostname>","<secondary_hostname>"],
        "cluster_per_group" : true
    },
    "automatic_failover" : true
}
...
"ha": { "cluster": "<cluster_name, primary or secondary>" }

Make a copy of the $MOOGSOFT_HOME/config/moog_farmd.conf file:

cp -p $MOOGSOFT_HOME/config/moog_farmd.conf $MOOGSOFT_HOME/config/moog_farmd.conf.orig

Uncomment and edit the following properties in $MOOGSOFT_HOME/config/moog_farmd.conf. Note the importance of the initial comma. Delete the cluster line in this section of the file:

Primary server

, ha:
{
    group: "moog_farmd",
    instance: "moog_farmd",
    default_leader: true,
    start_as_passive: false
}

Secondary server

, ha:
{
    group: "moog_farmd",
    instance: "moog_farmd",
    default_leader: false,
    start_as_passive: false
}

Start Moogfarmd on the primary and secondary servers:

process_cntl --process_name moog_farmd start

Initialize the user interface

Run the initialization script moog_init_ui.sh on the primary server. Substitute the name of your RabbitMQ zone and primary hostname.

When asked if you want to change the configuration hostname, say yes and enter the public hostname/domain name used in the URL to access Moogsoft Onprem via browser. If the URL uses a different hostname/domain name (e.g., an alias) the system will reject your login.

Primary server:

moog_init_ui.sh -twfz <MY_ZONE> -c <primary_hostname>:15672 -m <primary_hostname>:5672 -s <primary_hostname>:9200 -d <primary_hostname>:3309 -n

Edit the servlets settings on the primary server in the file $MOOGSOFT_HOME/config/servlets.conf. Note the importance of the initial comma.

,ha :
{
    instance: "servlets",
    group: "servlets_primary",
    start_as_passive: false
}

Restart Apache Tomcat and Moogfarmd on the primary server:

process_cntl --process_name apache-tomcat restart
process_cntl --process_name moog_farmd restart

Run the initialization script moog_init_ui.sh on the secondary server. Substitute the name of your RabbitMQ zone.

When asked if you want to change the configuration hostname, say yes and enter the public hostname/domain name used in the URL to access Moogsoft Onprem via browser.

Secondary server:

moog_init_ui.sh -twfz <MY_ZONE> -c <SECONDARY_HOSTNAME>:15672 -m <SECONDARY_HOSTNAME>:5672 -s <SECONDARY_HOSTNAME>:9200 -d <SECONDARY_HOSTNAME>:3309 -n

Edit the servlets settings in the secondary server $MOOGSOFT_HOME/config/servlets.conf file. Note the importance of the initial comma.

,ha :
{
    instance: "servlets",
    group: "servlets_secondary",
    start_as_passive: false
}

Restart Apache Tomcat and Moogfarmd on the secondary server:

process_cntl --process_name apache-tomcat restart
process_cntl --process_name moog_farmd restart
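The moog_init_ui.sh commands for the two servers differ only in the hostname, so the command line can be generated rather than typed, which reduces the risk of a mistyped port. The sketch below only prints the command. ui_init_command is a hypothetical helper, and the flags and ports are copied from the commands in this section.

```shell
# Hypothetical helper: print the moog_init_ui.sh command line used in
# this topic for one server. It does not run the command.
# Arguments: RabbitMQ zone name, hostname of the server being initialized.
ui_init_command() {
    zone=$1
    host=$2
    printf 'moog_init_ui.sh -twfz %s -c %s:15672 -m %s:5672 -s %s:9200 -d %s:3309 -n\n' \
        "$zone" "$host" "$host" "$host" "$host"
}
```

Review the printed command before running it on the server that it names.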

Enable HA for LAMs

There are two types of HA configuration for LAMs: Active/Active and Active/Passive.

Receiving LAMs that listen for events are configured as Active/Active. For example, the REST LAM.

Polling LAMs are configured as Active/Passive. For example, the SolarWinds LAM.

Every LAM has its own configuration file under $MOOGSOFT_HOME/config/. This example references rest_lam.conf and solarwinds_lam.conf.

Primary and Secondary servers

Edit the HA properties in the primary and secondary servers' LAM configuration files. Moogsoft Onprem automatically manages the active and passive role for the LAMs in a single process group:

# Receiving LAM (Active / Active)
# Configuration on Primary
ha:
{
    group : "rest_lam_primary",
    instance : "rest_lam",
    duplicate_source : false
},
...
# Configuration on Secondary
ha:
{
    group : "rest_lam_secondary",
    instance : "rest_lam",
    duplicate_source : false
},

# Polling LAM (Active / Passive)
# Configuration on Primary
ha:
{
    group : "solarwinds_lam",
    instance : "solarwinds_lam",
    only_leader_active : true,
    default_leader : true,
    accept_conn_when_passive : false,
    duplicate_source : false
},
...
# Configuration on Secondary
ha:
{
    group : "solarwinds_lam",
    instance : "solarwinds_lam",
    only_leader_active : true,
    default_leader : false,
    accept_conn_when_passive : false,
    duplicate_source : false
},

Start the LAMs:

process_cntl --process_name restlamd restart
process_cntl --process_name solarwindslamd restart

Run the HA Control command line utility ha_cntl -v to check the status of the LAMs:

Moogsoft AIOps Version{VERSION}
(C) Copyright 2012-2020 Moogsoft, Inc.
All rights reserved.
Executing: ha_cntl
Getting system status
Cluster: [PRIMARY] active
    ...
    Process Group: [rest_lam_primary] Active (no leader - all can be active)
        Instance: [rest_lam] Active
    ...
    Process Group: [solarwinds_lam] Active (only leader should be active)
        Instance: [solarwinds_lam] Active Leader
Cluster: [SECONDARY] partially active
    ...
    Process Group: [rest_lam_secondary] Active (no leader - all can be active)
        Instance: [rest_lam] Active
    ...
    Process Group: [solarwinds_lam] Active (only leader should be active)
        Instance: [solarwinds_lam] Passive

For more information, see the HA Control Utility Command Reference.
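To check the LAM status in a script instead of reading it by eye, you can extract one instance's status from the ha_cntl -v output. The following is a sketch that assumes the output keeps the "Cluster:", "Process Group:" and "Instance:" line layout shown in the example above. instance_status is a hypothetical helper, not part of the HA Control utility.

```shell
# Hypothetical helper: read `ha_cntl -v` style output on stdin and print
# the status of the first instance in the given process group of the
# given cluster.
# Arguments: cluster name (for example PRIMARY), process group name.
instance_status() {
    awk -v c="[$1]" -v g="[$2]" '
        /Cluster:/       { in_cluster = (index($0, c) > 0); in_group = 0 }
        /Process Group:/ { in_group = (in_cluster && index($0, g) > 0) }
        in_group && /Instance:/ {
            # Keep only the text after the bracketed instance name.
            print substr($0, index($0, "] ") + 2)
            exit
        }
    '
}
```

For example, ha_cntl -v | instance_status SECONDARY solarwinds_lam should print Passive for the configuration in this topic, and the same call with PRIMARY should print Active Leader.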